Introduction to Multimodal Models

Multimodal models are a significant advance in artificial intelligence and machine learning. They are designed to process and integrate multiple forms of data, such as text, images, and audio, which gives them a more complete picture of the information than any single data type can provide. As our world becomes increasingly data-rich, the importance of multimodal models continues to grow.

The Concept of Multimodal Models

At their core, multimodal models aim to leverage various types of data to improve decision-making and enhance the understanding of complex information. For instance, a model that analyzes social media posts can benefit from the text of the posts, accompanying images, and user interactions. By incorporating these different modalities, the model can deliver richer insights and more accurate predictions.

Why Multimodal Models Matter

The need for multimodal models arises from the complexity of human communication and the diverse ways in which we consume information. Humans naturally integrate multiple senses when processing information; for example, we often understand a concept better when we see it alongside a written description. Multimodal models mimic this human capability, making them invaluable in various applications, including healthcare, education, and entertainment.

Key Components of Multimodal Models

To create effective multimodal models, several key components must be considered:

- Data Types: Multimodal models work with different types of data, including images, text, audio, and video. Each data type contributes unique information that can enhance the model’s understanding.

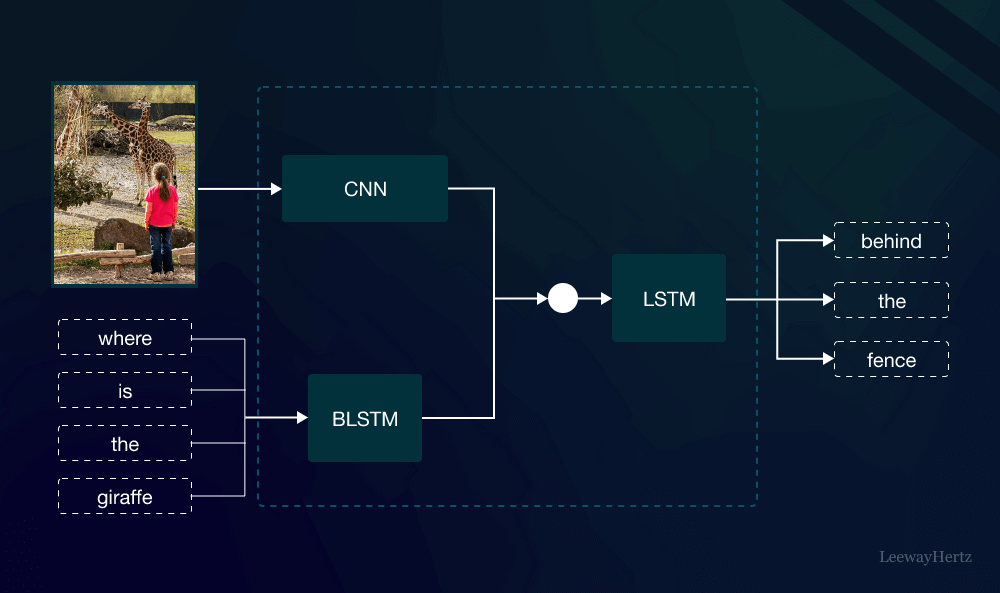

- Integration Techniques: Combining different data types is crucial. Techniques such as feature extraction, representation learning, and fusion methods help integrate the data seamlessly.

- Learning Algorithms: The choice of learning algorithms can significantly impact the performance of multimodal models. Deep learning techniques are commonly used, including convolutional neural networks (CNNs) for images and recurrent neural networks (RNNs) or transformers for text.

- Evaluation Metrics: To measure the effectiveness of multimodal models, appropriate evaluation metrics must be established. Metrics should reflect the model’s ability to accurately process and interpret the integrated data.
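
To make the idea of fusion methods more concrete, here is a minimal Python sketch of two common strategies: early fusion, which concatenates feature vectors before a single model sees them, and late fusion, which combines the predictions of separate per-modality models. The function names, feature values, and classifier scores are hypothetical and chosen only for illustration; a real system would get its features from trained encoders.

```python
from math import exp


def softmax(scores):
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(scores)
    exps = [exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def early_fusion(text_features, image_features):
    """Early fusion: concatenate per-modality features into one input vector."""
    return list(text_features) + list(image_features)


def late_fusion(per_modality_probs, weights=None):
    """Late fusion: weighted average of each modality's class probabilities."""
    n = len(per_modality_probs)
    weights = weights or [1.0 / n] * n
    num_classes = len(per_modality_probs[0])
    return [
        sum(w * probs[c] for w, probs in zip(weights, per_modality_probs))
        for c in range(num_classes)
    ]


# Hypothetical feature vectors (assumed to come from separate encoders).
text_features = [0.2, 0.7, 0.1]
image_features = [0.9, 0.4]
fused_input = early_fusion(text_features, image_features)

# Hypothetical raw scores from a text-only and an image-only classifier.
text_probs = softmax([2.0, 0.5])
image_probs = softmax([0.3, 1.2])
fused_probs = late_fusion([text_probs, image_probs])
predicted_class = max(range(len(fused_probs)), key=fused_probs.__getitem__)
```

Early fusion lets one model learn interactions between modalities, while late fusion keeps the per-modality models independent, which is convenient when one input is sometimes missing. Which one works better depends on the task and the data.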

Applications of Multimodal Models

Multimodal models have numerous applications across various fields. Some of the most notable include:

1. Healthcare

In healthcare, multimodal models can analyze patient data from multiple sources, including medical images, electronic health records, and genomic data. This integration helps improve diagnostic accuracy and personalized treatment plans.

2. Natural Language Processing

In natural language processing (NLP), multimodal models enhance the understanding of text by incorporating contextual images or audio. This is particularly useful in applications such as chatbots, virtual assistants, and language translation services.

3. Autonomous Vehicles

Multimodal models play a critical role in the development of autonomous vehicles. By integrating data from cameras, LiDAR, radar, and GPS, these models help vehicles better understand their environment and make informed driving decisions.
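
As a simplified illustration of combining sensor readings, the sketch below fuses several independent distance estimates with inverse-variance weighting, so that more precise sensors count for more. The sensor values and variances are made up, and this is only one classical technique under an independence assumption; production systems typically rely on more elaborate methods such as Kalman filters or learned fusion networks.

```python
def fuse_estimates(estimates):
    """Fuse independent (value, variance) estimates by inverse-variance weighting.

    Returns the fused value and its variance, which is never larger than
    the smallest input variance.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical distance-to-obstacle readings in meters: (estimate, variance).
camera = (21.0, 4.0)   # noisy depth estimate
lidar = (20.0, 0.25)   # precise range measurement
radar = (20.5, 1.0)

distance, variance = fuse_estimates([camera, lidar, radar])
```

With these numbers the fused distance lands close to the LiDAR reading, because LiDAR has the smallest assumed variance, and the fused variance is lower than that of any single sensor.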

4. Multimedia Content Creation

In content creation, multimodal models assist in generating rich media, such as videos that combine audio narration with visuals. This capability is valuable in education, marketing, and entertainment.

Challenges in Developing Multimodal Models

Despite their potential, developing multimodal models comes with challenges:

- Data Imbalance: Often, one modality may dominate the data, leading to biased results. Balancing the contributions of different data types is crucial for effective integration.

- Complexity of Integration: Combining data from diverse sources can be technically challenging. Ensuring that the model effectively learns from each modality requires sophisticated algorithms.

- Interpretability: Understanding how multimodal models arrive at their conclusions can be complex. Improving the interpretability of these models is essential for building trust, especially in critical applications like healthcare.

- Computational Resources: Training multimodal models typically requires significant computational power and memory, making them less accessible for smaller organizations.
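
One simple way to keep a single modality from dominating, sketched below in Python, is to rescale each modality's feature vector to unit length before concatenating. The modality names, feature values, and helper functions here are hypothetical, and this addresses only differences in scale and dimensionality; imbalance in the amount or quality of data per modality needs other remedies.

```python
from math import sqrt


def l2_normalize(vector):
    """Scale a vector to unit Euclidean length (zero vectors are returned as-is)."""
    norm = sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector] if norm > 0 else list(vector)


def balanced_concat(modalities, weights=None):
    """Normalize each modality, apply an optional weight, then concatenate."""
    weights = weights or {name: 1.0 for name in modalities}
    fused = []
    for name, features in modalities.items():
        fused.extend(weights[name] * x for x in l2_normalize(features))
    return fused


def energy_share(features, start, stop):
    """Fraction of the squared magnitude contributed by features[start:stop]."""
    total = sum(x * x for x in features)
    return sum(x * x for x in features[start:stop]) / total


# Hypothetical raw features: image values are on a much larger scale than text.
modalities = {
    "image": [120.0, 80.0, 200.0, 50.0],
    "text": [0.3, 0.1],
}

raw = modalities["image"] + modalities["text"]
balanced = balanced_concat(modalities)

raw_text_share = energy_share(raw, 4, 6)            # text is almost invisible
balanced_text_share = energy_share(balanced, 4, 6)  # text now carries half
```

The optional `weights` argument leaves room to favor a modality deliberately, for example when one input is known to be more reliable.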

Future of Multimodal Models

The future of multimodal models looks promising. As technology advances and more data becomes available, the capabilities of these models will continue to expand. Innovations in areas such as transfer learning, where a model trained on one task or dataset is adapted to another, will enhance the versatility of multimodal models. Additionally, improvements in computational efficiency will make it easier for researchers and organizations to implement these models in real-world applications.

Conclusion

Multimodal models are transforming the way we interact with data. By integrating various data types, they provide deeper insights and more accurate predictions, making them a vital tool across many industries. As research and development in this field continue to evolve, we can expect multimodal models to play an increasingly important role in shaping the future of artificial intelligence and machine learning. Embracing these models will lead to innovative solutions and a better understanding of the complex world around us.
