Large language models (LLMs) have changed how we interact with technology and have reshaped natural language processing (NLP) along with the wider fields of machine learning and artificial intelligence. This article compares large language models by their features, capabilities, and use cases. Understanding these differences makes it easier to choose the right model for a specific application.

What Are Large Language Models?

Large language models are a subset of artificial intelligence that utilizes deep learning techniques to process and generate human-like text. They are trained on vast amounts of textual data, enabling them to understand context, semantics, and even nuanced human language. The architecture of these models typically consists of neural networks that can predict the next word in a sequence, generate coherent paragraphs, or answer questions based on provided context.

Key Characteristics of Large Language Models

Several characteristics matter when comparing large language models: model size, training data, architecture, and intended use cases. Understanding them helps differentiate between models and their applications.

Model Size

One of the most significant factors in the comparison of large language models is their size, which is often measured in parameters. Parameters are the internal variables that the model adjusts during training. Generally, larger models with more parameters tend to perform better on a wider range of tasks due to their ability to capture complex patterns in data. However, they also require more computational resources, making them less accessible for smaller organizations or individuals.
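To make the resource cost concrete, here is a rough sketch of how parameter count translates into the memory needed just to store a model's weights. The model names and sizes are hypothetical, chosen only for illustration, and the estimate ignores activations, optimizer state, and any caching used during inference, so real requirements are higher.

```python
def estimate_weight_memory_gb(num_parameters: int, bytes_per_parameter: int = 2) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Hypothetical model sizes, not taken from any specific model.
example_models = [
    ("small", 1_000_000_000),
    ("medium", 7_000_000_000),
    ("large", 70_000_000_000),
]

for name, params in example_models:
    half_precision = estimate_weight_memory_gb(params, bytes_per_parameter=2)  # 16-bit weights
    full_precision = estimate_weight_memory_gb(params, bytes_per_parameter=4)  # 32-bit weights
    print(f"{name}: ~{half_precision:.0f} GB at 16-bit, ~{full_precision:.0f} GB at 32-bit")
```

Even this simplified estimate shows why the largest models are out of reach for a single consumer device, while smaller ones may fit.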

Training Data

The quality and quantity of training data significantly impact the performance of large language models. Models trained on diverse and extensive datasets are better equipped to handle a wide range of topics and linguistic styles. When comparing models, it is essential to consider where the training data came from, because biased or narrow data can lead to poor performance in certain contexts.

Architecture

The architecture of a large language model refers to the design of its neural network, including its layers, activation functions, and attention mechanisms. Most current large language models are built on the transformer architecture, while earlier language models often relied on recurrent neural networks (RNNs) and, less commonly, convolutional neural networks (CNNs). The choice of architecture can influence how effectively a model processes language and its ability to handle tasks such as translation, summarization, or sentiment analysis.

Performance Metrics

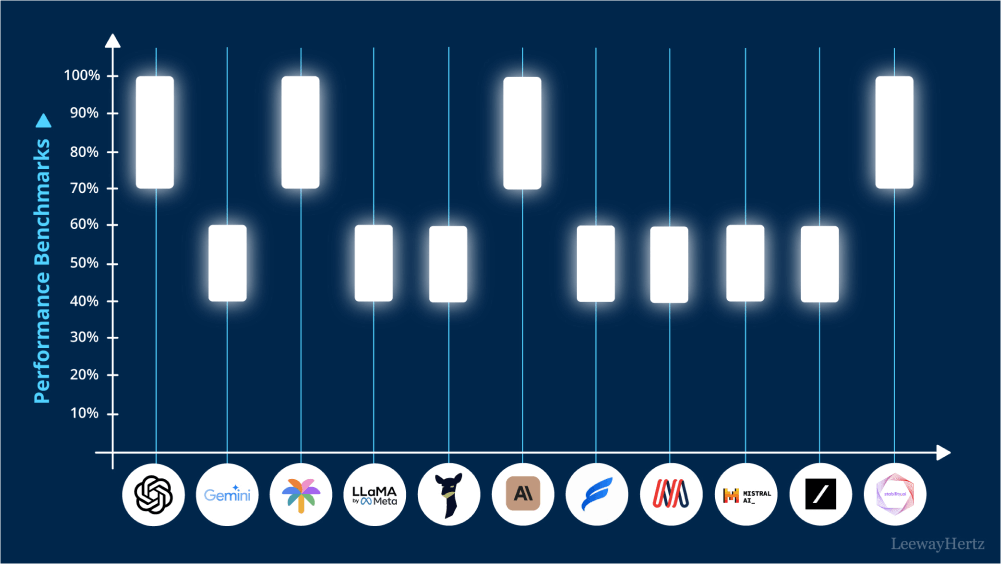

Evaluating large language models involves using various performance metrics to assess their capabilities. Common metrics include perplexity, BLEU score (for translation tasks), and F1 score (for classification tasks). In the comparison of large language models, understanding these metrics is crucial for determining how well a model performs against others in specific tasks.

Perplexity

Perplexity is a measure of how well a probability distribution predicts a sample. In the context of language models, a lower perplexity indicates better performance, as it means the model assigns higher probability to the words that actually come next in a sequence. Comparing perplexity scores across different models provides insight into their predictive capabilities, provided the models are evaluated on the same text and use the same tokenization, since perplexity is computed per token.
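As a minimal sketch, perplexity can be computed as the exponential of the average negative log-probability that a model assigned to each token that actually occurred. The probability lists below are made-up values standing in for two hypothetical models, not measurements from any real system.

```python
import math


def perplexity(token_probabilities):
    """Return exp(average negative log-probability) over the observed tokens."""
    if not token_probabilities:
        raise ValueError("need at least one token probability")
    avg_negative_log_prob = -sum(math.log(p) for p in token_probabilities) / len(token_probabilities)
    return math.exp(avg_negative_log_prob)


# Made-up probabilities each model assigned to the correct next token.
confident_model = [0.5, 0.5, 0.5, 0.5]
uncertain_model = [0.1, 0.1, 0.1, 0.1]

print(f"confident model perplexity: {perplexity(confident_model):.2f}")
print(f"uncertain model perplexity: {perplexity(uncertain_model):.2f}")
```

One way to read the number: a perplexity of about 2 means the model is, on average, as uncertain as if it were choosing between two equally likely words at each step.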

BLEU Score

The BLEU score is commonly used in machine translation to evaluate how closely a model’s output aligns with reference translations. It quantifies the overlap of n-grams (short word sequences) between the generated text and one or more reference translations, with higher scores indicating closer agreement with the references. When comparing large language models, BLEU scores can highlight strengths in translation tasks.
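The overlap idea can be sketched with clipped n-gram precision, one building block of BLEU. This is a simplified illustration only: full BLEU combines several n-gram orders and applies a brevity penalty, both of which are omitted here. The function names and example sentences are assumptions made for this sketch.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-grams in a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(candidate, reference, n=1):
    """Fraction of candidate n-grams found in the reference, with each
    n-gram credited at most as many times as it appears in the reference."""
    candidate_counts = Counter(ngrams(candidate, n))
    reference_counts = Counter(ngrams(reference, n))
    total = sum(candidate_counts.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, reference_counts[gram]) for gram, count in candidate_counts.items())
    return matched / total


candidate = "the cat sat on the mat".split()
reference = "the cat is on the mat".split()

print(f"unigram precision: {clipped_precision(candidate, reference, n=1):.3f}")
print(f"bigram precision:  {clipped_precision(candidate, reference, n=2):.3f}")
```

Clipping matters because it stops a candidate from scoring well by repeating a common word: "the the the" against the reference "the cat" earns credit for only one "the".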

F1 Score

The F1 score summarizes a model’s performance on classification tasks as the harmonic mean of precision and recall. Precision is the proportion of positive predictions that are correct, and recall is the proportion of actual positive instances that the model finds. Because it balances the two, the F1 score is more informative than plain accuracy when classes are imbalanced. When comparing large language models, F1 scores can provide valuable insights into their performance on tasks such as sentiment analysis or named entity recognition.
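The following sketch computes precision, recall, and F1 for a binary classification task, where F1 is the harmonic mean 2 * precision * recall / (precision + recall). The labels are invented for illustration, with 1 standing for a positive example such as a positive review.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    true_positives = sum(1 for t, p in pairs if t == positive and p == positive)
    false_positives = sum(1 for t, p in pairs if t != positive and p == positive)
    false_negatives = sum(1 for t, p in pairs if t == positive and p != positive)

    predicted_positives = true_positives + false_positives
    actual_positives = true_positives + false_negatives
    precision = true_positives / predicted_positives if predicted_positives else 0.0
    recall = true_positives / actual_positives if actual_positives else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Invented labels: 1 = positive, 0 = negative.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Returning 0.0 when a denominator is zero is a convention chosen for this sketch; libraries may handle that case differently or emit a warning.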

Use Cases and Applications

The applications of large language models span many industries and domains. Examining how each model performs on concrete use cases can reveal its particular strengths and weaknesses.

Text Generation

Large language models excel in generating coherent and contextually relevant text. This capability is widely used in creative writing, content generation, and automated reporting. Some models may be more adept at creative tasks, while others excel in generating technical or factual content.

Sentiment Analysis

Sentiment analysis involves determining the emotional tone behind a body of text. Large language models can effectively analyze reviews, social media posts, and other text forms to assess public opinion. The choice of model can influence the accuracy of sentiment detection, making it an essential factor in the comparison of large language models.

Language Translation

Machine translation has benefited significantly from advancements in large language models. These models can translate text between languages while preserving context and meaning. Translation quality varies among models and language pairs, so it is worth evaluating candidates on the languages you actually need.

Conclusion

In summary, the comparison of large language models involves evaluating various characteristics such as model size, training data, architecture, and performance metrics. By understanding these differences, we can identify the most suitable models for specific applications, whether it be text generation, sentiment analysis, or language translation. As the field of artificial intelligence continues to evolve, the insights gained from this comparison will play a crucial role in leveraging large language models effectively.
