Introduction to Parameter-efficient Fine-tuning (PEFT)

Large pre-trained models are expensive to adapt: conventional fine-tuning updates every weight in the model, which demands substantial compute and memory. Parameter-efficient Fine-tuning (PEFT) addresses this challenge by training only a small number of parameters for each new task. In this article, we will explore what PEFT is, its benefits, and its applications.

What is Parameter-efficient Fine-tuning (PEFT)?

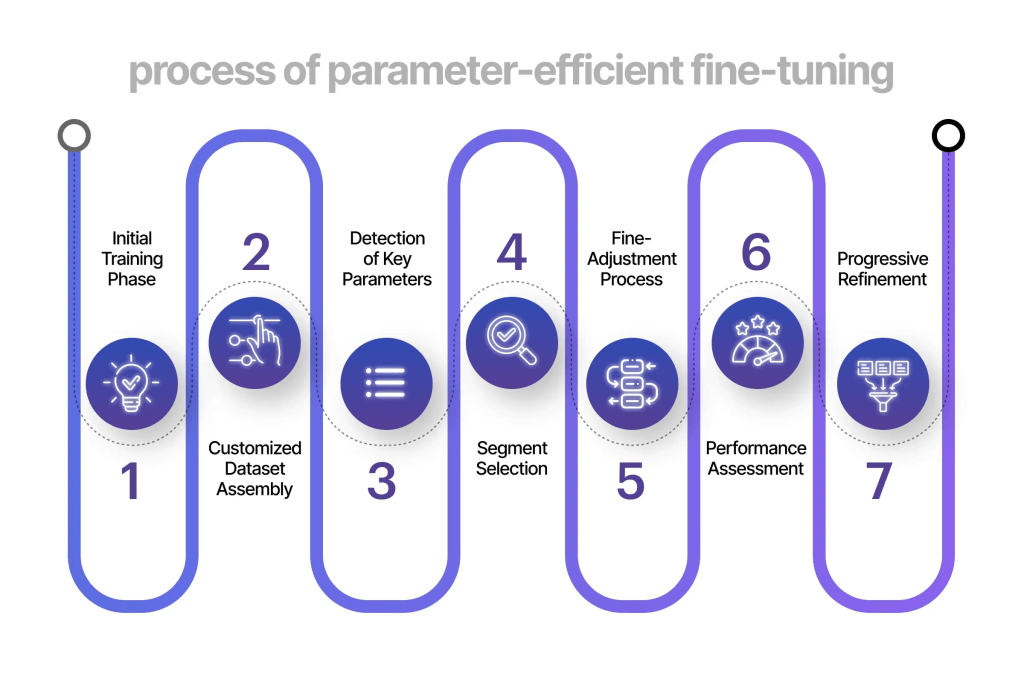

Parameter-efficient Fine-tuning (PEFT) is a family of methods for adapting pre-trained models to new tasks while training only a small number of parameters. Traditional (full) fine-tuning updates every weight in the model, which often requires extensive computational resources and can be both time-consuming and costly. PEFT, on the other hand, keeps most of the pre-trained weights frozen and trains either a small subset of the model’s existing parameters or a small set of newly added ones, making the process more efficient.
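To put a rough number on how few parameters can be trained, here is a small sketch based on low-rank adaptation (LoRA), one well-known PEFT technique that this article does not otherwise cover and that is used here only as an illustration. LoRA keeps a weight matrix of shape d_out x d_in frozen and trains two small matrices of shapes d_out x r and r x d_in instead. The layer size (4096 x 4096) and the rank (8) are assumed values chosen for the example, not figures from any specific model.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a rank-r adapter: (d_out x r) plus (r x d_in)."""
    return rank * (d_out + d_in)


# Assumed, illustrative sizes (not taken from the article or a specific model).
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
fraction = lora / full

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"rank-{rank} adapter:  {lora:,} trainable parameters")
print(f"adapter share:    {fraction:.2%}")
```

Under these assumptions the adapter trains 65,536 parameters instead of 16,777,216, which is about 0.39% of the layer. The exact share in practice depends on the chosen rank and on which layers receive adapters.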

In essence, PEFT aims to retain the valuable knowledge learned during the initial training phase while adapting the model to perform well on specific tasks. By only fine-tuning a small fraction of the parameters, PEFT reduces the computational burden and allows for faster and more efficient training.
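The idea of keeping pre-trained knowledge fixed while training a small fraction of parameters can be sketched with a toy model in plain Python. This is a minimal sketch, not how a real library implements PEFT: the "pre-trained" weights, the data, and the learning rate are all made up for illustration, and only the bias term is trained (similar in spirit to bias-only tuning methods).

```python
# Toy "pre-trained" linear model: prediction = w . x + b
pretrained_w = [0.5, -1.0, 2.0]   # frozen: never updated in the loop below
bias = 0.0                         # the only trainable parameter


def predict(x, w, b):
    return sum(wi * xi for wi, xi in zip(w, x)) + b


# Hypothetical new task: the same relationship, shifted by +2.0.
inputs = [[1.0, 0.0, 1.0], [0.0, 2.0, 1.0], [3.0, 1.0, 0.0], [1.0, 1.0, 1.0]]
targets = [predict(x, pretrained_w, 0.0) + 2.0 for x in inputs]

frozen_copy = list(pretrained_w)  # kept to confirm the weights never change
learning_rate = 0.1

for step in range(200):
    # Gradient of the mean squared error with respect to the bias only.
    grad_bias = sum(
        2 * (predict(x, pretrained_w, bias) - t) for x, t in zip(inputs, targets)
    ) / len(inputs)
    bias -= learning_rate * grad_bias  # update the trainable parameter

print(f"learned bias: {bias:.4f}")
print(f"frozen weights unchanged: {pretrained_w == frozen_copy}")
```

Here one of the four parameters is trained, and the bias converges to the shift of 2.0 while the original weights stay exactly as they were. Real PEFT methods apply the same principle at a much larger scale.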

Benefits of Parameter-efficient Fine-tuning (PEFT)

Reduced Computational Resources

One of the primary advantages of Parameter-efficient Fine-tuning (PEFT) is its ability to significantly reduce the computational resources required for training. Traditional fine-tuning methods often demand substantial GPU power and memory, because gradients and optimizer state must be kept for every weight in the model. PEFT only needs them for the small set of trainable parameters, which lessens this demand and makes fine-tuning more accessible for researchers and organizations with limited resources.
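A back-of-the-envelope estimate shows where the memory saving comes from. The sketch below assumes 32-bit floats and the Adam optimizer, which stores two extra values per trainable parameter, and it ignores activations, which can also be large. The 7-billion-parameter model and the 1% trainable share are hypothetical numbers chosen for illustration.

```python
BYTES_PER_FP32 = 4


def training_memory_gb(total_params: int, trainable_params: int) -> float:
    """Rough memory for weights, gradients, and Adam state (activations ignored)."""
    weights = total_params * BYTES_PER_FP32               # every weight is stored
    gradients = trainable_params * BYTES_PER_FP32         # only for trainable weights
    adam_state = trainable_params * 2 * BYTES_PER_FP32    # two moment estimates each
    return (weights + gradients + adam_state) / 1e9


# Hypothetical model size and trainable share (assumptions, not measurements).
total = 7_000_000_000
trainable_peft = total // 100  # 1% of the parameters

full_ft_gb = training_memory_gb(total, total)
peft_gb = training_memory_gb(total, trainable_peft)

print(f"full fine-tuning: ~{full_ft_gb:.2f} GB")
print(f"PEFT (1% trained): ~{peft_gb:.2f} GB")
```

Under these assumptions, full fine-tuning needs roughly 112 GB for weights, gradients, and optimizer state, while the PEFT setup needs roughly 29 GB, most of which is the frozen weights themselves. Mixed precision or a different optimizer would change the absolute numbers.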

Faster Training Times

PEFT can shorten training times compared to conventional methods. Since only a small portion of the model’s parameters is being fine-tuned, fewer gradients are computed and each optimizer update is cheaper. The forward pass still runs through the full model, so the size of the speedup depends on the method and the hardware. This advantage is particularly beneficial when working with large-scale models or when rapid iteration and experimentation are required.

Cost Efficiency

The reduction in computational resources and faster training times directly translate to cost savings. Storage costs can drop as well, since each task needs only its small set of trained parameters saved alongside one shared copy of the pre-trained model. For businesses and researchers, the ability to fine-tune models without incurring exorbitant costs is a significant advantage. Parameter-efficient Fine-tuning (PEFT) makes state-of-the-art machine learning models more accessible to a broader audience.

Improved Generalization

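Parameter-efficient Fine-tuning (PEFT) can also help models generalize, particularly when the task dataset is small. Because most weights stay frozen, the model retains the robustness and general knowledge acquired during the pre-training phase, and there are fewer trainable parameters available to overfit the new data. The result is often performance close to full fine-tuning on the target task with less risk of forgetting what the model already knew, although the outcome depends on the task and the method.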

Applications of Parameter-efficient Fine-tuning (PEFT)

Natural Language Processing (NLP)

In Natural Language Processing (NLP), Parameter-efficient Fine-tuning (PEFT) is widely used. Large language models, such as GPT-3 and BERT, can be adapted with PEFT to perform specific tasks like sentiment analysis, text summarization, and machine translation more efficiently. The ability to fine-tune these models without extensive computational resources opens up new possibilities for NLP applications.

Computer Vision

Parameter-efficient Fine-tuning (PEFT) is also making waves in computer vision. Pre-trained models like ResNet and EfficientNet can be fine-tuned for tasks such as image classification, object detection, and segmentation. By leveraging PEFT, these models can be adapted to new datasets and tasks with minimal additional computational overhead, leading to faster and more efficient deployment in real-world applications.

Healthcare

The healthcare industry stands to benefit significantly from Parameter-efficient Fine-tuning (PEFT). Medical imaging models, for instance, can be fine-tuned to detect specific diseases or anomalies in X-rays, MRIs, and other medical images. The efficiency of PEFT makes it easier to update and deploy these critical models rapidly, which can contribute to improved patient outcomes and more efficient healthcare delivery.

Autonomous Vehicles

In the domain of autonomous vehicles, Parameter-efficient Fine-tuning (PEFT) can help adapt pre-trained models to different environments and scenarios. Whether it’s for object detection, path planning, or sensor fusion, PEFT allows for quick adaptation, helping autonomous systems operate safely and effectively in diverse conditions.

Future of Parameter-efficient Fine-tuning (PEFT)

The future of Parameter-efficient Fine-tuning (PEFT) looks promising. As machine learning models continue to grow in size and complexity, the need for efficient fine-tuning methods will only increase. Researchers are actively exploring new techniques to further optimize PEFT, making it even more accessible and effective.

Moreover, PEFT is itself a form of transfer learning, and combining it with other advancements in machine learning, such as meta-learning, holds great potential. By combining these approaches, we can create models that are not only highly efficient but also capable of rapidly adapting to new tasks and environments.

Conclusion

Parameter-efficient Fine-tuning (PEFT) represents a significant advancement in the field of machine learning. By training only a small number of parameters, PEFT offers several benefits, including reduced computational resources, faster training times, cost efficiency, and, in many cases, better generalization on small datasets. Its applications span various domains, from natural language processing and computer vision to healthcare and autonomous vehicles. As models continue to grow, PEFT is likely to play an increasingly important role in making them accessible and efficient for a wide range of applications.
