Introduction

In the realm of machine learning, the development of accurate predictive models is paramount. However, creating a model is only the first step; ensuring its reliability and generalizability is equally crucial. Model validation serves as a cornerstone in this process, providing the means to assess a model’s performance and robustness. This article delves into the significance of model validation in machine learning, exploring various techniques, with a focus on cross-validation.

Understanding Model Validation in Machine Learning

- The Essence of Model Validation Model validation in machine learning is the process of evaluating a machine learning model’s performance to ensure its effectiveness in making predictions on new, unseen data. The goal is to assess how well a model generalizes to real-world scenarios, beyond the data it was trained on. Without proper validation, a model may suffer from overfitting, where it performs well on the training data but fails to generalize to new instances, or underfitting, where the model is too simplistic and fails to capture the underlying patterns in the data.

- Importance of Model Validation

- Generalization Assessment: Model validation provides insights into how well a model is likely to perform on new, unseen data. This is crucial for deploying models in real-world applications, where the ability to make accurate predictions on diverse data is essential.

- Identifying Overfitting and Underfitting: Validation helps detect overfitting or underfitting issues early in the model development process. Overfit models may capture noise in the training data, while underfit models may fail to capture important patterns. Validation allows for adjustments to improve the model’s performance.

- Model Comparison: Validation enables the comparison of different models, helping data scientists and machine learning practitioners select the best-performing model for a given task.

Model Validation Techniques

- Holdout Validation Holdout validation is one of the simplest techniques, involving splitting the dataset into two parts: a training set used to train the model and a separate validation set used to assess performance. While straightforward, this method can be sensitive to the initial random split, and the model’s performance may vary based on the chosen data subset.

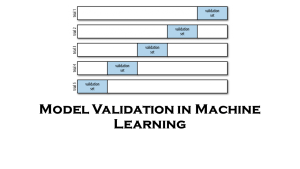

- K-Fold Cross-Validation Cross-validation is a more robust technique that mitigates the sensitivity to a single data split. K-Fold Cross-Validation involves dividing the dataset into ‘K’ folds or subsets. The model is trained on K-1 folds and validated on the remaining fold, and this process is repeated K times, with each fold serving as the validation set exactly once. The performance metrics are then averaged over the K iterations to obtain a more reliable estimate of the model’s performance.

- Leave-One-Out Cross-Validation (LOOCV) LOOCV is an extreme case of K-Fold Cross-Validation where K is set equal to the number of instances in the dataset. This method provides a more accurate assessment of model performance, but it can be computationally expensive, especially for large datasets.

- Stratified Cross-Validation In classification tasks with imbalanced class distribution, stratified cross-validation ensures that each fold maintains the same class distribution as the original dataset. This prevents biased evaluation and provides a more accurate representation of the model’s performance.

Best Practices in Model Validation

- Randomization Randomize the data before splitting to avoid any inherent order or patterns in the dataset influencing the model’s performance. This is particularly important when using holdout validation or in scenarios where the data may be ordered.

- Evaluation Metrics Choose appropriate evaluation metrics based on the nature of the task (classification, regression, etc.). Common metrics include accuracy, precision, recall, F1 score for classification, and mean squared error for regression. Select metrics that align with the specific goals of the machine learning application.

- Regularization Techniques Implement regularization techniques, such as dropout or L1/L2 regularization, to prevent overfitting. Regularization helps penalize complex models and encourages the learning of more generalizable patterns.

- Cross-Validation for Hyperparameter Tuning Utilize cross-validation for hyperparameter tuning to ensure that the model’s performance is assessed across a range of parameter values. This helps in selecting the optimal hyperparameters that generalize well to unseen data.

Conclusion

In the ever-evolving landscape of machine learning, model validation stands as a fundamental practice to ensure the reliability and generalizability of predictive models. Techniques such as holdout validation, K-Fold Cross-Validation, and LOOCV play a pivotal role in assessing a model’s performance across various scenarios. By implementing best practices in model validation, data scientists and machine learning practitioners can build robust models that excel not only on training data but also in real-world applications, making significant strides toward achieving the full potential of machine learning technologies.

Leave a comment